Most AI-generated marketing content doesn’t sound bad. It sounds fine—and that’s the problem.

If your LinkedIn posts, landing pages, nurture emails, and “thought leadership” keep drifting into the same casual-professional groove (“Let’s talk about…”, “In today’s fast-paced world…”, “Here are 5 tips…”), you’re seeing a predictable system behavior—not a creative failure.

The fix isn’t “better prompts.” It’s standardizing your content decisions and then letting AI automate the writing within those constraints.

Quick definitions (so we’re talking about the same thing)

AI content sameness: when AI-generated content converges on the same phrasing, structure, and claims—so it could plausibly be published by any brand in your category.

Brand voice calibration: conditioning AI output on your writing and rules (examples, style guidelines, do/don’t lists, approved claims) so drafts match your tone and editorial standards—not the internet’s defaults. This can be done via strong prompting, retrieval-augmented generation (RAG), style embeddings, or fine-tuning depending on risk and scale.

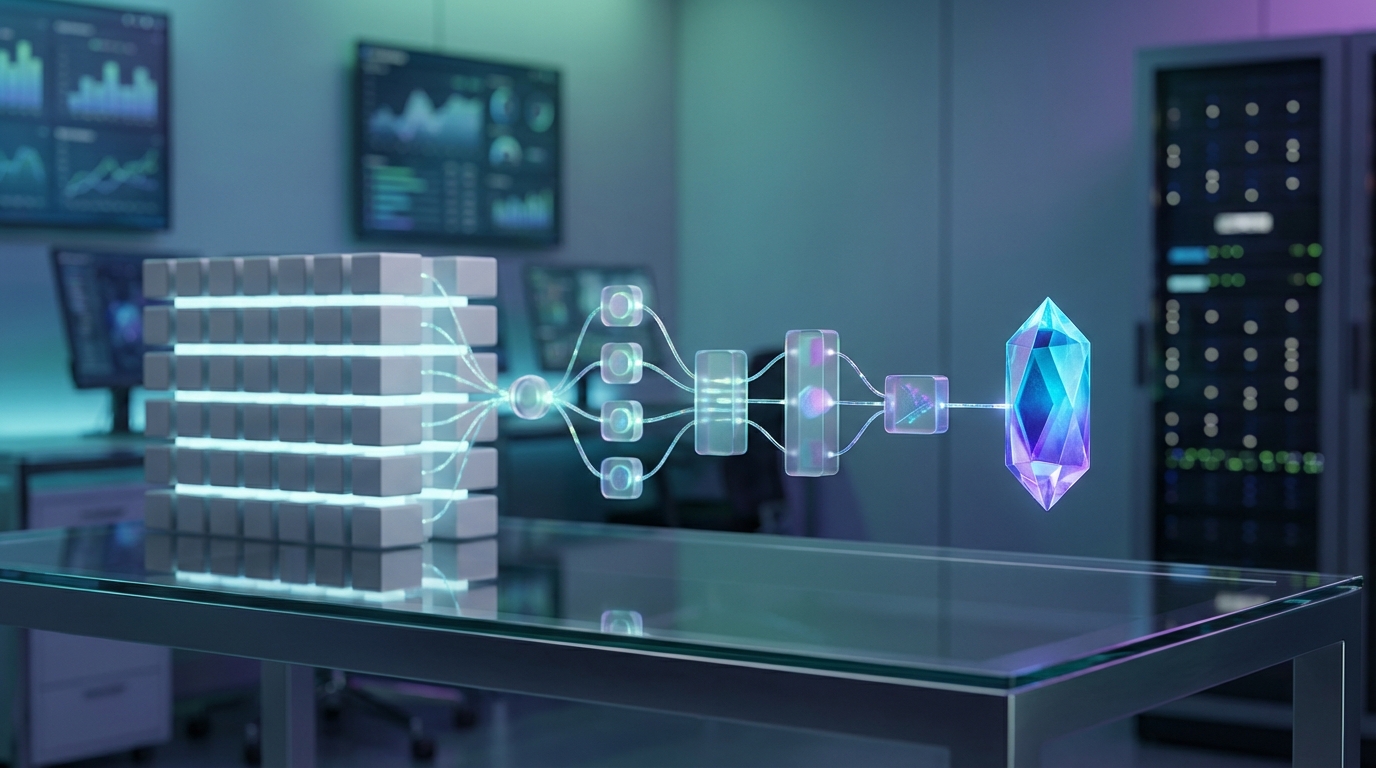

Multi-model pipeline: a workflow where different steps (briefing, drafting, voice QA, claim QA, formatting) are handled by purpose-built prompts/models/tools instead of asking one model to “do everything” in one pass.

Verified AI content: content produced with documented checks (voice adherence, claim support, source/provenance, approvals) so you can defend what you published.

Answer engine optimization (AEO): structuring content so answer engines and AI-powered search experiences can extract clear, direct answers (good headers, definitions, summaries, consistent entities). It’s adjacent to SEO, but optimized for “answer selection” behaviors.

How it works (pipeline at a glance)

Inputs → Brand corpus + style guide + creative brief + allowed proof

Process → Generate variants → voice check → claim check → legal/compliance (if needed) → format for channel

Outputs → On-voice draft + claim inventory + citations/links + approval log
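
As a rough sketch of that flow, assume each check is a plain function that returns a pass/fail flag plus a note. The stages can then be chained and every result written to an approval log. The names `Draft`, `run_pipeline`, and the two stub checks are hypothetical and only illustrate the shape; real checks would be much richer.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Tuple


@dataclass
class Draft:
    text: str
    approval_log: List[str] = field(default_factory=list)
    passed: bool = True


# A stage inspects a draft and returns (ok, note). Hypothetical signature.
Stage = Callable[[Draft], Tuple[bool, str]]


def run_pipeline(draft: Draft, stages: List[Tuple[str, Stage]]) -> Draft:
    """Run the named stages in order and stop at the first failure."""
    for name, check in stages:
        ok, note = check(draft)
        draft.approval_log.append(f"{name}: {'pass' if ok else 'fail'} ({note})")
        if not ok:
            draft.passed = False
            break
    return draft


# Stub checks for illustration only.
def voice_check(draft: Draft) -> Tuple[bool, str]:
    hit = "fast-paced world" in draft.text.lower()
    return (not hit, "no-go phrase found" if hit else "no no-go phrases")


def claim_check(draft: Draft) -> Tuple[bool, str]:
    hit = "%" in draft.text
    return (not hit, "percentage without proof" if hit else "no numeric claims")


STAGES = [("voice check", voice_check), ("claim check", claim_check)]

result = run_pipeline(Draft("Speed isn't the advantage. Coherence is."), STAGES)
print(result.passed, result.approval_log)
```

Stopping at the first failure keeps the log short and tells the reviewer exactly which gate to fix before anything else runs.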

Symptoms: what “AI-generated content sounds generic” looks like in the wild

If 4+ of these show up repeatedly, you have an AI content sameness problem:

- Cliché openers (“Let’s unpack…”, “Here’s the thing…”, “In today’s world…”)

- Predictable structure (hook → 3 bullets → soft CTA; no real argument)

- Claims without proof (“boosts engagement,” “drives results,” “streamlines workflows”)

- No trade-offs (everything is upside; no boundaries, risks, or constraints)

- Interchangeable nouns (“platform,” “solution,” “customers,” “teams”) instead of your domain vocabulary

- Polite, low-friction tone that avoids a point of view

- Same cadence everywhere (LinkedIn sounds like a landing page sounds like an email)

Why AI content converges on the same voice (3 root causes)

Root cause #1: Models regress toward common patterns

General-purpose language models are trained on large, mixed datasets (web text, books, code, and other curated/licensed sources). When you ask for marketing content, the model often recombines high-frequency patterns it has seen before—especially the patterns that look like “successful marketing writing.”

That’s why you get the same:

- Openers: “Let’s talk about…”, “Here’s the thing…”, “If you’ve ever wondered…”

- Structure: hook → bullets → CTA

- Tone: confident-but-safe, friendly-but-bland

Several practitioners describe this as a homogenization effect tied to training data and common prompt habits (see The Homogenization Problem: Why AI-Generated Marketing All Sounds the Same and AI Writing and the Problem of Sameness).

Key takeaway: If your input is generic, the output will typically drift toward what “average marketing” looks like.

Root cause #2: Your prompt is a format request, not a spec

The most common B2B prompt is some version of:

“Write a professional LinkedIn post about X.”

That’s not direction. It’s a container.

Without deep context (your POV, proof, audience sophistication, taboos, constraints), the model fills in the gaps with the most likely defaults. Practitioner commentary often points to shallow prompts and thin context as drivers of sameness, and to richer briefs + iteration as the differentiator (see Can AI's Sameness Problem Be Solved? This Founder Thinks So).

On volume: Campaign reports 71.7% of new content is produced with a human–AI mix (per its reporting; definitions and sampling matter). More content produced faster can increase the surface area of generic patterns unless you put constraints in place (see Beyond the Hype: Why Most AI Projects Fail and How to Get It Right).

Key takeaway: Treat prompts like specs. If your spec doesn’t include brand constraints and proof, the model can’t reliably invent them.

Root cause #3: Single-pass workflows + default safety tuning smooth out edge

Most teams run:

- Generate draft

- Light edit

- Publish

That optimizes for speed, not distinctiveness.

Two forces push single-pass output toward generic:

- No rewrite loop: Distinct voice usually emerges through rewriting—tightening claims, choosing sharper examples, cutting filler.

- Default safety tuning: Many mainstream deployments are tuned to avoid risky claims and polarizing language. That broad acceptability can flatten humor, bite, and specificity.

Agency leaders have flagged the same pattern: grammatically clean output that still feels emotionally flat (see The AI Problem in Agencies: Why Originality is Becoming a Premium Skill Again).

Key takeaway: If you want memorable, you need iteration. First drafts tend to be safe.

The core insight: standardize decisions → then automate writing

The fastest way to de-generic your AI output is to stop asking AI to “be creative” and start asking it to follow your decision rules.

Before you scale production, standardize:

- What you believe (POV)

- What you can prove (evidence)

- What you refuse to sound like (voice boundaries)

- What you won’t claim (risk constraints)

Once those decisions are explicit, automation becomes an advantage instead of an averaging machine.

Fix checklist (snippet-friendly): 10 steps to reduce AI content sameness

- Collect a brand corpus (20–50 strong samples)

- Label what “good” means (tone, structure, proof, audience level)

- Write voice boundaries (10 no-go phrases + 10 signature moves)

- Create a one-page creative brief (POV, proof, CTA, funnel stage)

- Generate multiple hooks and outlines (selection pressure)

- Draft with constraints (channel format + brand rules)

- Score voice adherence (human rubric + automated checks)

- Verify claims (claim inventory + evidence links + required caveats)

- Run governance (legal/compliance review when needed)

- Publish in AEO-friendly structure (direct answers, definitions, consistency)

What distinctive AI content looks like (and how to score it)

Generic LinkedIn post

Let’s talk about content marketing automation.

In today’s fast-paced world, marketers need to create more content in less time. Automation can help streamline workflows, improve consistency, and drive better results.

Here are 3 ways to get started:

- Audit your process

- Use templates

- Track performance

What’s your biggest challenge with content?

Distinctive LinkedIn post

Most “content marketing automation” fails for one reason: teams automate the writing before they standardize the decisions.

If you can’t answer these three questions consistently, automation will just scale inconsistency:

- What do we believe that others don’t? (POV)

- What proof do we use when we make a claim? (evidence)

- What do we refuse to sound like? (voice boundaries)

Build those rules once. Then automate distribution, repurposing, and QA.

Speed isn’t the advantage. Coherence is.

A quick “Sameness Score” rubric (10 points)

Score each draft 0–10: add one point for each item below that applies. If it’s 6+, it’s too easy for someone else to ship.

- Cliché opener present (1)

- Generic urgency (“today’s world,” “fast-paced”) (1)

- Vague benefit claims with no mechanism (1)

- No proof or example (1)

- No trade-off / no boundary (1)

- Interchangeable nouns dominate (1)

- Template structure with no argument arc (1)

- “Soft CTA” question ending by default (1)

- No domain-specific terms your buyers use (1)

- Could be posted by a competitor with zero edits (1)

Example scoring (rough):

- Generic post: 8–9/10 (hits most failure modes)

- Distinctive post: 2–3/10 (clear stance + framework + boundary language)
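
If an editor records the rubric as yes/no flags, the tally is easy to automate. The sketch below assumes one boolean per rubric item; the key names and the `sameness_score` helper are made up for illustration, and the judgment behind each flag stays human.

```python
from typing import Dict

# One key per rubric item above (hypothetical names).
SAMENESS_CRITERIA = (
    "cliche_opener",
    "generic_urgency",
    "vague_benefit_claims",
    "no_proof_or_example",
    "no_tradeoff_or_boundary",
    "interchangeable_nouns",
    "template_structure",
    "soft_cta_question",
    "no_domain_terms",
    "competitor_could_post",
)


def sameness_score(flags: Dict[str, bool]) -> int:
    """Add one point for every rubric item flagged True; missing items count as 0."""
    unknown = set(flags) - set(SAMENESS_CRITERIA)
    if unknown:
        raise ValueError(f"Unknown criteria: {sorted(unknown)}")
    return sum(1 for name in SAMENESS_CRITERIA if flags.get(name, False))


def is_too_generic(score: int, threshold: int = 6) -> bool:
    """Apply the 6+ cutoff from the rubric."""
    return score >= threshold


# Hypothetical editor judgment for one draft.
flags = {"cliche_opener": True, "generic_urgency": True, "no_proof_or_example": True}
score = sameness_score(flags)
print(score, is_too_generic(score))
```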

The practical fix: a brand-calibrated, multi-model pipeline

If generic inputs create generic outputs, the fix is a better system—one that forces specificity.

Two approaches come up repeatedly in discussions of sameness:

- Brand voice calibration (conditioning on your corpus and rules) (see The Homogenization Problem: Why AI-Generated Marketing All Sounds the Same)

- Iteration and selection (variants + refinement instead of first-pass publishing) (see Can AI's Sameness Problem Be Solved? This Founder Thinks So)

The AIJourn piece cites some vendors as examples of using existing brand content to drive more distinctive output; treat that as one implementation path, not the only one. Mechanism options include:

- RAG (retrieval-augmented generation): the model pulls from your approved corpus at generation time

- Style-anchored prompting: system prompts + exemplars + explicit do/don’t rules

- Fine-tuning: training a model variant on your content (higher effort; higher governance needs)
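
Of the three, style-anchored prompting is the simplest to sketch. The example below only assembles a prompt string from exemplars and do/don’t rules; it does not call any model or vendor API. The function name, the wording of the instructions, and the `max_exemplars` cap are assumptions for illustration.

```python
from typing import Sequence


def build_style_prompt(
    exemplars: Sequence[str],
    no_go: Sequence[str],
    signature_moves: Sequence[str],
    max_exemplars: int = 3,
) -> str:
    """Assemble a system prompt from brand rules and a few on-brand samples."""
    parts = [
        "Write in the brand voice shown in the examples below.",
        "",
        "Do not use these phrases:",
    ]
    parts += [f"- {phrase}" for phrase in no_go]
    parts += ["", "Prefer these moves:"]
    parts += [f"- {move}" for move in signature_moves]
    parts += ["", "On-brand examples:"]
    # Cap the number of samples so the prompt stays a manageable size.
    for i, sample in enumerate(exemplars[:max_exemplars], start=1):
        parts += [f"Example {i}:", sample.strip(), ""]
    return "\n".join(parts).rstrip()


prompt = build_style_prompt(
    exemplars=["Speed isn't the advantage. Coherence is."],
    no_go=["Game-changer", "Cutting-edge"],
    signature_moves=["Name trade-offs explicitly"],
)
print(prompt)
```

A RAG setup would follow the same pattern, except the exemplars would be retrieved from the approved corpus per request instead of being passed in by hand.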

Pipeline artifacts (make the system tangible)

Below is a practical step-by-step with inputs/outputs. This is what “running AI like production” looks like.

Step 0: Brand corpus preparation (20–50 examples)

Goal: give the system a clean target to imitate.

How to select your corpus:

- Pick assets that performed well and read like your best thinking (not just high traffic)

- Include a mix: 5–10 landing page sections, 5–10 emails, 5–10 LinkedIn posts, 5–10 blog excerpts

- Avoid one-off experiments you don’t want to repeat

How to clean/label:

- Remove outdated offers, wrong positioning, deprecated features

- Label each sample with: channel, audience level, tone notes, and “why this is good”

If you don’t have strong writing yet: start by curating 10–15 founder/SME interviews, sales call snippets (approved), and internal POV memos. Your “voice” can be built from how you speak—then editorialized into publishable form.

Templates you can copy/paste

1) One-page creative brief (template)

Asset type/channel: (LinkedIn post / landing page section / email)

Target reader: (role, seniority, context)

Job-to-be-done: (what they’re trying to accomplish)

Your POV (non-obvious belief):

Enemy / anti-pattern you’re calling out:

Mechanism (how it works):

Proof allowed: (case metric, internal data, public source, SME quote)

Claims we will not make: (compliance/risk constraints)

CTA: (demo, download, reply, book, etc.)

Funnel stage: (awareness / consideration / decision)

Voice notes: (formality, humor, sentence length, taboo phrases)
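
If the brief lives in a tool rather than a doc, one option is to treat it as a small record and refuse to generate until every field is filled. This is a minimal sketch under that assumption; the `CreativeBrief` class, its field names, and the sample values are hypothetical.

```python
from dataclasses import dataclass, fields
from typing import List

FUNNEL_STAGES = {"awareness", "consideration", "decision"}


@dataclass
class CreativeBrief:
    channel: str
    target_reader: str
    job_to_be_done: str
    pov: str
    anti_pattern: str
    mechanism: str
    proof_allowed: str
    claims_not_made: str
    cta: str
    funnel_stage: str
    voice_notes: str

    def problems(self) -> List[str]:
        """List empty fields and an unrecognized funnel stage, if any."""
        issues = [
            f"missing: {f.name}"
            for f in fields(self)
            if not str(getattr(self, f.name)).strip()
        ]
        stage = self.funnel_stage.strip().lower()
        if stage and stage not in FUNNEL_STAGES:
            issues.append(f"unknown funnel stage: {self.funnel_stage}")
        return issues


# Sample values are invented for illustration.
brief = CreativeBrief(
    channel="LinkedIn post",
    target_reader="VP Marketing, mid-market SaaS",
    job_to_be_done="Scale content without losing voice",
    pov="",  # left blank on purpose
    anti_pattern="Prompt libraries without briefs",
    mechanism="Standardize decisions, then automate writing",
    proof_allowed="Internal data",
    claims_not_made="No guaranteed outcomes",
    cta="Reply",
    funnel_stage="awareness",
    voice_notes="Short sentences, no hype",
)
print(brief.problems())
```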

2) Voice boundary list (filled example for a fictional B2B analytics company)

No-go phrases/tropes (we don’t ship these):

- “In today’s fast-paced world”

- “Game-changer”

- “Unlock (value/insights)”

- “Leverage” as a filler verb

- “Seamless” (unless we specify what’s seamless and why)

- “Revolutionize”

- “Drive results” without naming the result

- “Cutting-edge”

- “Boost productivity” without a mechanism

- “Let’s unpack” / “Let’s dive in” openers

Signature phrases/moves (we want these patterns):

- “Here’s the decision you need to standardize:”

- “If you can’t explain it in one sentence, you can’t operationalize it.”

- “Mechanism > motivation.”

- Use numbers when possible (ranges, thresholds, SLAs)

- Define terms in plain English (“By X, we mean…”)

- Name trade-offs explicitly (“You gain X; you give up Y.”)

- Use concrete nouns (pipeline stages, objects, documents)

- Prefer short sentences for claims; longer sentences for nuance

- Call out anti-patterns with specifics (“prompt libraries without briefs”)

- End with an operational next step (timeboxed)
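
The no-go half of this list is the easiest part to automate. Below is a minimal scanner, assuming exact phrase matching after lowercasing and normalizing curly apostrophes; `find_no_go` and `NO_GO_PHRASES` are hypothetical names. Conditional rules such as “Leverage” as a filler verb or “Seamless” without a mechanism still need an editor, so they are left out.

```python
import re
from typing import List, Sequence

# Only the unconditional phrases from the list above.
NO_GO_PHRASES = [
    "in today's fast-paced world",
    "game-changer",
    "revolutionize",
    "cutting-edge",
    "let's unpack",
    "let's dive in",
]


def normalize(text: str) -> str:
    """Lowercase and map curly apostrophes to straight ones so variants match."""
    return text.replace("\u2019", "'").lower()


def find_no_go(text: str, phrases: Sequence[str] = NO_GO_PHRASES) -> List[str]:
    """Return the no-go phrases that appear in the text as whole phrases."""
    haystack = normalize(text)
    hits = []
    for phrase in phrases:
        # Lookarounds stop matches inside longer words; inflected forms
        # (e.g. "revolutionized") need their own entries.
        pattern = r"(?<!\w)" + re.escape(normalize(phrase)) + r"(?!\w)"
        if re.search(pattern, haystack):
            hits.append(phrase)
    return hits


print(find_no_go("Let\u2019s unpack why this cutting-edge tool matters."))
```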

3) QA checklist (voice + claims)

Voice QA:

- Zero no-go phrases

- Contains one clear POV sentence

- Uses our domain nouns (not “platform/solution” everywhere)

- Includes a trade-off or boundary

- Reads like one person wrote it (consistent cadence)

Claims QA:

- List every claim that implies performance, savings, or outcomes

- For each claim: attach proof (link, dataset, customer quote, internal metric) or add a caveat

- Remove implied universals (“always,” “guarantees”) unless legally approved

- Confirm product statements match current capabilities
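
The Claims QA steps can start from a rough claim inventory built with simple heuristics. The sketch below flags sentences that contain a percentage, a universal such as “always,” or an outcome verb, then lists the ones that still lack proof or a caveat. The patterns and function names are assumptions; expect false positives and misses, so treat the output as a starting list for a human reviewer.

```python
import re
from typing import Dict, List

# Heuristic patterns only; tune them for your own copy.
CLAIM_PATTERNS = {
    "percentage": re.compile(r"\d+(?:\.\d+)?\s?%"),
    "universal": re.compile(r"\b(always|never|guarantees?|guaranteed)\b", re.I),
    "outcome_verb": re.compile(r"\b(increase|boost|drive|reduce|save)s?\b", re.I),
}


def claim_inventory(text: str) -> List[Dict]:
    """Split text into sentences and keep those that look like claims."""
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]
    inventory = []
    for sentence in sentences:
        reasons = [name for name, pat in CLAIM_PATTERNS.items() if pat.search(sentence)]
        if reasons:
            inventory.append(
                {"sentence": sentence, "reasons": reasons, "proof": None, "caveat": None}
            )
    return inventory


def unresolved(inventory: List[Dict]) -> List[Dict]:
    """Claims that have neither proof nor a caveat attached."""
    return [c for c in inventory if not c["proof"] and not c["caveat"]]


draft = "Our tool boosts reply rates by 12%. We define terms in plain English. It always works."
claims = claim_inventory(draft)
claims[0]["proof"] = "link to internal metric (placeholder)"
print([c["sentence"] for c in unresolved(claims)])
```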

Minimal viable pipeline (ship in 2 weeks)

You don’t need a full platform rebuild. You need a repeatable workflow with owners and acceptance criteria.

Scope

- One channel (pick LinkedIn or email—don’t start with “everything”)

- One content type (e.g., weekly POV post or one nurture sequence)

Owners

- Marketing lead (owner): defines POV and approves final

- Subject-matter expert (SME): approves technical accuracy

- Editor/content strategist: enforces voice boundaries + structure

SLA (example)

- Brief: 30 minutes

- Variant generation: 30–60 minutes

- Review + QA: 60–90 minutes

- Publish-ready within: 2 business days

Acceptance criteria

- Sameness Score average ≤ 3/10 across top 3 drafts

- 100% of non-trivial claims have proof or a caveat

- Voice boundary violations: 0
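
These three criteria are mechanical enough to encode as a single gate. One possible sketch follows, assuming “top 3 drafts” means the three lowest Sameness Scores in the batch; `accept_batch` and its parameters are hypothetical.

```python
from typing import List, Tuple


def accept_batch(
    sameness_scores: List[int],
    claims_total: int,
    claims_resolved: int,
    voice_violations: int,
) -> Tuple[bool, List[str]]:
    """Return (accepted, failures) for one batch of drafts."""
    failures = []
    # Assumption: the "top 3" drafts are the three with the lowest scores.
    top3 = sorted(sameness_scores)[:3]
    if not top3:
        failures.append("no drafts scored")
    elif sum(top3) / len(top3) > 3:
        failures.append("sameness average above 3/10")
    if claims_resolved < claims_total:
        failures.append("claims without proof or caveat")
    if voice_violations > 0:
        failures.append("voice boundary violations")
    return (not failures, failures)


print(accept_batch([2, 3, 4, 8], claims_total=5, claims_resolved=5, voice_violations=0))
```

Returning the list of failures, not just a boolean, gives the editor a concrete reason to send back with the draft.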

Mature pipeline (6–8 weeks): governance, tooling, and scale

What changes at maturity

- Governance is explicit: what needs legal review, what doesn’t

- Provenance exists: you can trace claims to sources

- QA is measurable: voice adherence and defect categories are tracked

Recommended roles

- Content ops / program manager: runs workflow, maintains corpus and templates

- Legal/compliance partner: defines review triggers + response SLA

- Product marketing: owns positioning, claims library, and competitive framing

- Analytics/ops: tracks voice score, revision cycles, and performance metrics

Common SLAs (set these or you’ll stall)

- Legal review for gated assets: 3–5 business days

- SME review: 2 business days

- Editorial QA: 1 business day

Tooling patterns (platform-agnostic)

- Central corpus repository (versioned)

- Claims library (approved statements + required caveats)

- RAG or retrieval layer for “approved language” reuse

- Automated checks (no-go phrase scanning, similarity checks, reading level)

Measurement: how to quantify sameness and voice adherence

You need two layers: a human rubric (for judgment) and automated checks (for scale).

Baseline metrics to capture (week 0)

- Sameness Score (0–10): average per asset type

- Voice adherence (0–5): editor rating (cadence, POV, vocabulary, boundaries)

- Evidence density: # of supported claims / total claims

- Revision cycles: number of stakeholder review rounds

- Time-to-publish: hours from brief to final

Target metrics (practical, not magical)

- Reduce Sameness Score by 30–50% on your primary channel

- Cut revision cycles by 1 round by making claims + voice rules explicit

- Increase evidence density to >80% supported claims for top-funnel assets; ~100% for product and performance claims
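
The arithmetic behind these targets is simple, and writing it down removes ambiguity about what counts as a pass. The sketch below assumes evidence density is supported claims divided by total claims, reads “>80%” as a strict inequality, and reads “~100%” as exactly 100%; the function names and the `asset_kind` labels are hypothetical.

```python
def evidence_density(supported: int, total: int) -> float:
    """Share of claims with proof attached; 1.0 when there are no claims."""
    if total < 0 or supported < 0 or supported > total:
        raise ValueError("need 0 <= supported <= total")
    return 1.0 if total == 0 else supported / total


def meets_evidence_target(supported: int, total: int, asset_kind: str) -> bool:
    """Check the density target for 'top_funnel' or 'product' assets."""
    density = evidence_density(supported, total)
    if asset_kind == "top_funnel":
        return density > 0.80
    if asset_kind == "product":
        return density == 1.0  # assumption: "~100%" treated as strict
    raise ValueError(f"unknown asset kind: {asset_kind}")


def sameness_reduction(baseline_avg: float, current_avg: float) -> float:
    """Fractional drop in average Sameness Score versus the week-0 baseline."""
    if baseline_avg <= 0:
        raise ValueError("baseline average must be positive")
    return (baseline_avg - current_avg) / baseline_avg


print(evidence_density(9, 10), meets_evidence_target(9, 10, "top_funnel"))
print(sameness_reduction(8.0, 4.0))
```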

Automated checks you can run today

- Phrase blacklist hits (no-go phrases)

- Similarity against your last 50 posts (n-gram overlap or embedding similarity)

- Claim detection heuristics (flag percentages, superlatives, “increase/boost/drive”)
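
For the similarity check, word n-gram overlap needs nothing beyond the standard library. The sketch below uses Jaccard similarity over word trigrams, which is one reasonable choice among several (embedding similarity would catch paraphrases that this misses). The function names and the default `n=3` are assumptions.

```python
import re
from typing import Iterable, Set, Tuple


def word_ngrams(text: str, n: int = 3) -> Set[Tuple[str, ...]]:
    """Lowercase the text and return its set of word n-grams."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    return {tuple(words[i:i + n]) for i in range(len(words) - n + 1)}


def jaccard(a: Set, b: Set) -> float:
    """Size of the intersection over size of the union; 0.0 if both are empty."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def max_similarity(draft: str, previous_posts: Iterable[str], n: int = 3) -> float:
    """Highest n-gram overlap between the draft and any earlier post."""
    draft_grams = word_ngrams(draft, n)
    return max(
        (jaccard(draft_grams, word_ngrams(post, n)) for post in previous_posts),
        default=0.0,
    )


history = ["Speed is not the advantage. Coherence is the advantage."]
print(max_similarity("Speed is not the advantage here.", history))
```

A high score against your own archive suggests the draft is recycling earlier phrasing; what counts as “high” is something to calibrate against your own posts.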

Governance and risk (how to prevent hallucinated claims)

If you’re using AI in marketing, the failure mode isn’t “a typo.” It’s a confident, wrong claim.

Minimum governance that works:

- Claim inventory required for any asset with performance language

- Source/provenance attached (link to internal doc, public report, or approved customer quote)

- Legal triggers defined (regulated industries, pricing, guarantees, comparative claims)

- Escalation path (who can say “no,” and what happens next)

This aligns with the broader warning that mindless AI usage can erode brand voice and trust signals (see How Mindless Use of AI Content Undermines Your Brand Voice).

Channel-specific guidance (what changes by format)

LinkedIn

- Constraint: attention is scarce; claims need to be crisp

- What works: POV + mechanism + one concrete example

- Risk: overgeneralized advice; default “engagement bait” questions

Landing pages

- Constraint: legal/product accuracy and conversion clarity

- What works: proof blocks, explicit differentiation, controlled vocabulary

- Risk: inflated claims (“guarantee,” “best-in-class”) and vague benefits

Email (nurture)

- Constraint: relationship and relevance over volume

- What works: one idea, one next step; consistent sender voice

- Risk: sounding like every other sequence (“quick question,” “just circling back”)

Where AEO fits (keep it practical)

AEO isn’t a magic lever—but structure and clarity do increase the likelihood that your content is extractable and consistently summarized.

In pipeline terms, AEO is the distribution formatting layer:

- Use question-based headers

- Put the direct answer in the first 1–2 sentences

- Define key terms once, consistently

- Keep your entities consistent across assets (product names, concepts, stages)

The strategic point: automation increases volume, volume increases repetition, and repetition can push you into sameness. AEO-style structure helps, but it works best when paired with brand voice calibration and claim verification.

Anti-patterns we see (and how to fix them)

- Prompt libraries without briefs → Fix: require the one-page creative brief before generation

- Tone adjectives instead of examples (“confident, friendly”) → Fix: provide 20–50 on-brand samples and a boundary list

- No claim inventory → Fix: add a claim QA step with proof/caveat requirements

- One-pass publishing → Fix: generate variants, then select and rewrite

- No ownership (“AI wrote it”) → Fix: assign a DRI for POV, accuracy, and final approval

Mini case: single-pass vs. pipeline (real-ish B2B scenario)

Scenario: Mid-market B2B SaaS launching a new “pipeline visibility” feature. Goal: 1 landing page section + 1 LinkedIn post.

Single-pass workflow

- Time-to-first draft: 10 minutes

- Review rounds: 3 (PMM, product, legal)

- Issues found: vague claims, mismatched product capabilities, generic tone

- Outcome: publish slips by ~1 week due to back-and-forth

Pipeline workflow (MVP)

- Brief (PMM): 30 minutes

- Corpus pull + boundaries (editor): 60 minutes (one-time setup)

- Variants: 10 hooks + 3 drafts: 45 minutes

- Voice + claims QA: 60 minutes

- Review rounds: 1–2 (because claims and boundaries are explicit)

Outcome delta (typical, not guaranteed): faster approvals and fewer “rewrite from scratch” moments because stakeholders are reacting to clear decisions, not vibes.

Conclusion: sameness is predictable—and you can engineer it out

AI content sameness comes from three things working together: common pattern regression, thin specs, and single-pass workflows. If you want distinctive output at scale, you need to standardize decisions and run a pipeline that enforces them.

Your next step (timeboxed)

Within 5 business days, pick one channel and run this experiment:

- Write a one-page creative brief (use the template above)

- Build a voice boundary list (10 no-go + 10 signature)

- Generate 10 hooks and 3 drafts

- Score each draft with the 10-point Sameness Score

- Run claim inventory + proof/caveat check

Acceptance criteria: publish only drafts that score ≤ 3/10 and have documented support for non-trivial claims.

FAQ

What is brand voice calibration?

Brand voice calibration is the practice of constraining AI output using your existing writing, your style guide, and explicit do/don’t rules so drafts match your editorial standards. Depending on your needs, you can do this with RAG, style-anchored prompting, style embeddings, or fine-tuning.

What is a multi-model pipeline?

A multi-model pipeline is a workflow where different steps—briefing, variant generation, drafting, voice QA, claim QA, and channel formatting—are separated so each step can be optimized and audited.

How do you measure brand voice consistency?

Use a combination of (1) a human scoring rubric (voice adherence, POV clarity, specificity) and (2) automated checks (no-go phrases, similarity vs. prior posts, claim detection). Track baselines and set explicit targets by channel.

Does sameness impact performance?

Generic content often blends into the feed and can weaken perceived differentiation. Practitioners also warn that when creative looks interchangeable, results can suffer (see AI Content Creation Challenges | What Marketers Should Know). Treat it as a measurable hypothesis: run A/B tests on on-voice vs. generic variants and compare CTR, CVR, and qualitative feedback.

Sources/References

- The Homogenization Problem: Why AI-Generated Marketing All Sounds the Same

- Can AI's Sameness Problem Be Solved? This Founder Thinks So

- The AI Problem in Agencies: Why Originality is Becoming a Premium Skill Again

- AI Writing and the Problem of Sameness

- Beyond the Hype: Why Most AI Projects Fail and How to Get It Right

- AI Content Creation Challenges | What Marketers Should Know

- How Mindless Use of AI Content Undermines Your Brand Voice